Centaurs are mythological creatures with a human’s upper body and a horse’s lower body. They symbolize a union of human intellect and animal strength. In AI technology, the term Centaur refers to a type of hybrid use of generative AI that combines human and AI capabilities. It does so by maintaining a clear division of labor between the two, like a centaur’s divided body. Cyborgs, by contrast, have no such clear division: the human and AI tasks are closely intertwined.

Centaur idea by Ralph Losey and execution in watercolor by his AI, ChatGPT4 Visual Muse version.

A centaur method is designed so there is one work task for the human and another for the AI. For example, creation of a strategy is typically a task done by the human alone. It is a separate task for the AI to write an explanation of the strategy devised by the human. The lines between the tasks are clear and distinct, just like the dividing line between the human and horse in a Centaur.

This concept is shown by the above image. It was devised by Ralph Losey and then generated by his AI, the ChatGPT4 model Visual Muse. The AI had no part in devising the strategy and no part in the idea of putting the image of a Centaur here. It was also Ralph’s sole idea to have the human half appear in robotic form and to use a watercolor style of illustration. The AI’s only task was to generate the image. That was the separate task of the AI. Unfortunately, it turns out AI is not good at making Centaurs, especially ones with a robot top instead of a human one like that in the following image.

Classic Centaur in Photorealistic style by Ralph Losey with ChatGPT4 Visual Muse version.

It made this image after only a few tries. But the first image of the Centaur with a robot top was a struggle. I can usually generate the image I have in mind, often even better than what I first conceived, in just a few prompts. But here, with a half robot Centaur, it took 118 attempts to generate the desired image! I tried many, many different prompts. I even used two different image generative programs, Dall-E and Midjourney. I tried 96 times with Midjourney (it generates fast) and never could get it to make a Centaur with a robot top half. But it did make quite a few funny mistakes, and a few scary ones too. Shown below are a few of the 117 AI bloopers. I note that overall Dall-E did much better than Midjourney, which never did seem to “get it.” The one Dall-E example of a blooper is bottom right, pretty close. The rest are all by Midjourney. I especially like the robot head on the butt of the sort-of robot horse. It is the bass-ackwards version of what I requested!

After 22 tries with Dall-E I finally got it to make the image I wanted.

The point of this story is that the Centaur method failed to make the Centaur. To get the image I wanted, I was forced to work very closely and directly with the AI; in other words, I was forced to switch to the Cyborg method. I did not want to, but the Cyborg method was the only way I could get the AI to make a Centaur with a robotic top. Back and forth I went, 118 times. The irony is clear. But there is a deeper lesson here that emerged from the frustration, which I will come back to in the conclusion.

Background on the Centaur and Cyborg as Images of Hybrid Computer Use

The idea to use the Centaur symbol to describe an AI method is credited to chess grandmaster Garry Kasparov. He is famous in AI history for his losing battle in 1997 with IBM’s Deep Blue. Kasparov returned the following year with computer in hand, with the idea that man and computer together could beat any computer alone. It worked, a redemption of sorts. Kasparov ended up calling this Centaur team chess, where human-machine teams play each other online. It is still actively played today. Many claim it is still played at a level beyond that of any supercomputer today, although this is untested. See e.g. The Real Threat From ChatGPT Isn’t AI…It’s Centaurs (PCGamer, 2/13/23).

The use of the term Centaur was expanded and explained by Harvard Professor Soroush Saghafian in his article Effective Generative AI: The Human-Algorithm Centaur (Harvard DASH, 10/2023). He explains the hybrid relationship as one where the unique powers of intuition of humans are added to those of artificial intelligence. In a medical study he did at his Harvard lab with the Mayo Clinic, they analyzed the results of doctors using LLM AI in a centaur-type model. The goal was to try to reduce readmission risks for patients who underwent organ transplants.

We found that combining human experts’ intuition with the power of a strong machine learning algorithm through a human-algorithm centaur model can outperform both the best algorithm and the best human experts. . . .

In this article, we focus on recent advancements in Generative AI, and especially in Large Language Models (LLMs). We first present a framework that allows understanding the core characteristics of centaurs. We argue that symbiotic learning and incorporation of human intuition are two main characteristics of centaurs that distinguish them from other models in Machine Learning (ML) and AI.

Soroush Saghafian, Effective Generative AI: The Human-Algorithm Centaur (Harvard DASH, 10/2023), page 2.

The Cyborg model is slightly different in that man and machine work even more closely together. The concept of a cyborg, a mechanical man, also has its origins in the ancient Greek myths: Talos. He was supposedly a giant bronze mechanical man built by Hephaestus, the Greek god of invention, blacksmithing and volcanos. The Roman equivalent god was Vulcan, who was supposedly ugly, but there are no stories of his having pointy ears. You would think that techies might seize upon the name Vulcan, or Talos, to symbolize the other method of hybrid AI use, where tasks are closely connected. But they did not; they went with the much more modern term, Cyborg.

The word was first coined in 1960 (before Star Trek) by two dreamy AI scientists who combined the root words CYBernetic and ORGanism to describe a being with both organic and biomechatronic body parts. Here is Ralph Losey’s image of a Cyborg, which, again ironically, he created quickly with a simple Centaur method in just a few tries. Obviously the internet, which trained these LLM AIs, has many more cyborg-like android images than centaurs.

Cyborg image created in detailed photorealistic style by Ralph Losey with ChatGPT4 Visual Muse version.

More On the Cyborg Method

The Cyborg method, unlike the Centaur, supposedly has no clear-cut divisions between human and AI work. Instead, Cyborg work and tasks are all closely related, like a cybernetic organism. People and ChatGPTs usually say that the Cyborg approach involves a deep integration of AI into the human workflow. The goal is a blend where AI and human intelligences constantly interact and complement each other. In contrast to the Centaur method, the Cyborg does not distinctly separate tasks between AI and humans. For instance, in the Cyborg method a human might start a task, and AI might refine or advance it, or vice versa. This approach is said to be particularly valuable in dynamic environments where continuous adaptation and real-time collaboration between human and AI are crucial. See e.g. Center for Centaurs and Cyborgs OpenAI GPT version (Free GPT version by Community Builder that we recommend. Try asking it more about Cyborgs and Centaurs). Also see: Emily Reigart, A Cyborg and a Centaur Walk Into an Office (NAB Amplify, 9/24/23); Ethan Mollick, Centaurs and Cyborgs on the Jagged Frontier: I think we have an answer on whether AIs will reshape work (One Useful Thing, 9/16/23).

Ethan Mollick is a Wharton Professor who is heavily involved with hands-on AI research in the work environment. To quote the second to last paragraph of his article (emphasis added):

People really can go on autopilot when using AI, falling asleep at the wheel and failing to notice AI mistakes. And, like other research, we also found that AI outputs, while of higher quality than that of humans, were also a bit homogenous and same-y in aggregate. Which is why Cyborgs and Centaurs are important – they allow humans to work with AI to produce more varied, more correct, and better results than either humans or AI can do alone. And becoming one is not hard. Just use AI enough for work tasks and you will start to see the shape of the jagged frontier, and start to understand where AI is scarily good… and where it falls short.

Ethan Mollick, Centaurs and Cyborgs on the Jagged Frontier: I think we have an answer on whether AIs will reshape work (One Useful Thing, 9/16/23).

Asleep at the Wheel

Obviously, falling asleep at the wheel is what we have seen in the hallucinating AI fake citations cases. Mata v. Avianca, Inc., 22-cv-1461 (S.D.N.Y. June 22, 2023) (first in a growing list of sanctioned attorney cases). Also see: Park v. Kim, 91 F.4th 610, 612 (2d Cir. 2024). But see: United States of America v. Michael Cohen (SDNY, 3/20/24) (Cohen’s attorney not sanctioned. “His citation to non-existent cases is embarrassing and certainly negligent, perhaps even grossly negligent. But the Court cannot find that it was done in bad faith.”)

These lawyers were not only asleep at the wheel, they had no idea what they were driving, nor that they needed a driving lesson. It is not surprising they crashed and burned. It is like the first automobile drivers who would instinctively pull back on the steering wheel in an emergency to get their horses to stop. That may be the legal profession’s instinct as well, to try to stop AI, to pull back from the future. But it is shortsighted, at best. The only viable solution is training and, perhaps, licensing of some kind. These horseless buggies can be dangerous.

Cyborg style Centaur by Ralph Losey with ChatGPT4 Visual Muse version.

Skilled legal professionals who have studied prompt engineering, either methodically or through a longer trial and error process, write prompts that lead to fewer mistakes. Strategic use of prompts can significantly reduce the number and type of mistakes. Still, surprise errors by generative AI cannot be eliminated altogether. Just look at the trouble I had generating a half robot Centaur. LLM language and image generators are masters of surprise. Still, with hybrid prompting skills the surprise results typically bring more delight than fright.

Centaur watercolor image unintentionally generated by Ralph Losey with ChatGPT4 Visual Muse version.

That was certainly the case in a recent study by Professor Ethan Mollick and several others on the impact of AI hybrid work. Navigating the Jagged Technological Frontier: Field Experimental Evidence of the Effects of AI on Knowledge Worker Productivity and Quality (Harvard Business School, Working Paper 24-013). I will write a full article on this soon. As a quick summary, researchers from multiple schools collaborated with the Boston Consulting Group and found a surprisingly high increase in productivity by consultants using AI. The study was based on controlled tests of an AI hybrid team approach to specific consulting work tasks. The results also showed that, even though the specific work tasks tested were performed much faster, the quality was maintained, and for some consultants, increased significantly.

Although we do not have a formal study yet to prove this, it is the supposition of most everyone in the legal profession who is now using AI that lawyers can also improve productivity and maintain quality. Of course, careful double-checking of AI work product is required to catch errors and maintain quality. This applies not only to the obvious case hallucinations, but also to what Professor Mollick called AI’s tendency to be “homogenous and same-y in aggregate” in its writing. Also see: Losey, Stochastic Parrots: How to tell if something was written by an AI or a human? (common “tell” words used way too often by generative AIs). Lawyers who use AI attentively, without over-delegation to AI, can maintain high quality work, meet all of their ethical duties, and still increase productivity.

The hybrid approach to the use of generative AI, both Centaur and Cyborg, has been shown to significantly enhance consulting work. Many legal professionals using AI are seeing the same results in legal work. Lawyers using AI properly can significantly increase productivity and maintain quality. For most of the Boston Consulting Group consultants tested, their quality of work actually went up. There were, however, a few exceptional outliers whose test quality was already at the top. The AI did not make the work of these elite few any better. The same may be true of lawyers.

Image by Ralph Losey with ChatGPT4 Visual Muse version.

Transition from Centaur to Cyborg

Experience shows that lawyers who do not use AI properly, typically by over-delegation and inadequate supervision, may increase productivity, but do so at the price of increased negligent output. That is too high a price. Moreover, legal ethics, including Model Rule 1.1, requires competence. I conclude, along with most everyone in the legal profession, that stopping the use of AI by lawyers is futile, but at the same time, we should not rush into negligent use of this powerful tool. Lawyers should go slow and delegate to AI on a very limited basis at first. That is the Centaur approach. Again, like most everyone else, my opinion is to start slow and begin to use AI in a piecemeal fashion. For that reason you should begin now and avoid death by committee, or as lawyers like to call it, paralysis by analysis.

Then, as your experience and competence grow, slowly increase your use of generative AI and experiment with applying it to more and more tasks. You will start to be more Cyborg-like. Soon enough you will have the AI competitive edge that so many outside experts over-promise.

Vendors and outside experts can be a big help in implementing generative AI, but remember, this is your legal work. For software, look at the subscription license terms carefully. Note any gaps between what marketing promises and the superseding agreements deliver. Pick and choose your generative AI software applications carefully. Use the same care in picking the tasks to begin to implement official AI usage. You know your practice and capabilities better than any outside expert offering cookie-cutter solutions.

Use the same care and intelligence in selecting the best, most qualified people in your firm or group to train and investigate possible purchases. Here the super-nerds should rule, not the powerful personalities, nor even necessarily the best attorneys. New skill sets will be needed. Look for the fast learners and the AI enthusiasts. Start soon, within the next few months.

Cyborg in watercolor style generated with ease by Ralph Losey with ChatGPT4 Visual Muse version.

Conclusion

According to Wharton Professor Ethan Mollick, secret use and false claims of personal work product have already begun in many large corporations. In his YouTube video at 53:30 he shares a funny story of a friend in a big bank. She secretly uses AI all of the time to do her work. Ironically, she was the person selected to write a policy to prohibit the use of AI. She did as requested, but did not want to be bothered to do it herself, so she directed a GPT on her personal phone to do it. She sent the GPT-written policy prohibiting use of GPTs to her corporate email account and turned it in. The clueless boss was happy, probably impressed by how well it was written. Mollick claims that secret, unauthorized use of AI in big corporations is widespread.

This reminds me of the time I personally heard the GC of a big national bank, now defunct, proudly say that he was going to ban the use of email by his law department. We all smiled, but did not say no to mister big. After he left, we LOL’ed about the dinosaur for weeks. Decades later I still remember it well.

Centaur and Cyborg moving confidently together into future legal work by Ralph Losey with ChatGPT4 Visual Muse version.

So do not be foolish or left behind. Proceed expeditiously, but carefully. Then you will know for yourself, from first-hand experience, the opportunities and the dangers to look out for. And remember, no matter what any expert may suggest to the contrary, you must always supervise the legal work done in your name.

There is a learning curve in the careful, self-knowledge approach, but eventually the productivity will kick in, with no loss of quality and no embarrassing public mistakes. For most professionals, there should also be an increase in quality, not just quantity or speed of performance. In some areas of practice, there may be a substantial improvement in both productivity and quality. It all depends on the particular tasks and the circumstances of each project. Lawyers, like life, are complex and diverse with ever changing environments and facts.

Fast moving group of tech lawyers. Midjourney photo image by Ralph Losey.

My image generation failure is a good example. I expected a Centaur-like delegation to AI would result in a good image of a Centaur with a robotic top half. Maybe I would need to make a few adjustments and tries, but I never would have guessed I would have to make 118 attempts before I got it right. My efforts with Visual Muse and Midjourney are typically full of pleasant surprises, with only a few frustrating failures. (Although the failure images are sometimes quite funny.) So I was somewhat surprised to have to spend an hour to bring my desired cyber Centaur to life. Somewhat, but not totally surprised. I know from experience that this just happens sometimes with generative AI. It is the nature of the beast. Some uncertainty is a certainty.

As is often the case, the hardship did lead to a new insight into the relationship between the two types of hybrid AI use: Centaur and Cyborg. I realized they are not a duality, but more of a skill-set evolution. They have different timings and purposes, and they require different prompting skill levels. On a learning curve basis, we all start as Centaurs. With experience we slowly become more Cyborg-like. We can step in with close Cyborg processes when the Centaur approach does not work well for some reason. We can cycle in and out between the two hybrid approaches.

There is a sequential reality to first use. Our adoption of generative AI should begin slowly, like a Centaur, not a Cyborg. It should be done with detachment and separation into distinct, easy tasks. You should also start with the most boring, repetitive tasks first. See e.g. Ralph Losey’s GPT model, Innovation Interviewer (work in progress, but available at the ChatGPT store).

Cyborg riding a Centaur lawyer into the future by Ralph Losey with ChatGPT4 Visual Muse version.

Our mantra as beginner Centaurs should be a constant whisper of trust, but verify. Check the AI work, learn the mistakes and impose policies and procedures to guard against them. That is what good Centaurs do. But as personal and group expertise grows, the hybrid relations will naturally grow stronger. We will work closer and closer with AI over time. It will be safe and ethical to speed up because we will learn its eccentricities, its strengths and weaknesses. We will begin to use AI in more and more work tasks. We will slowly, but surely, transform into a Cyborg work style. Still, as legal professionals, our work will be ever mindful of our duties to client and courts.

More machine attuned than before, we will become like Cyborgs, but still remain human. We will step into a Cyborg mind-set to get the job done, but will bring our intuition, feelings and other special human qualities with us.

Future lawyers wearing AI glasses in digital art style by Ralph Losey with ChatGPT4 Visual Muse version.

I agree with Ray Kurzweil that we will ultimately merge with AI, but disagree that it will come by nanobots in your blood or other physical alterations. I think it is much more likely to come from wearables, such as special glasses and AI connectivity devices. It will be more like the 2013 movie Her, which is Sam Altman’s favorite, with an AI operating system and a constant companion cell phone (the inseparable cell phone part has already come true). It will, I predict, be more like that than the wearables shown in the Avengers movies, such as Tony Stark’s flying Iron Man suit.

But probably it will look nothing like either of those Hollywood visions. The real future has yet to be invented. It is in your hands.

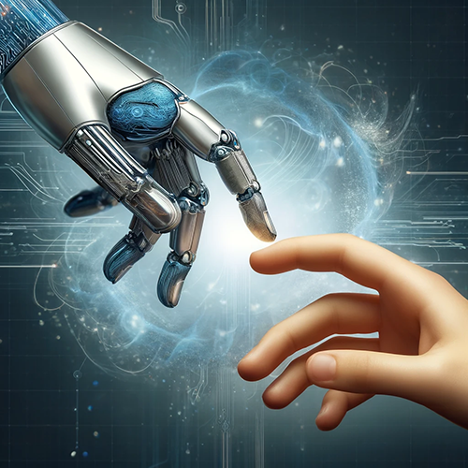

Hybrid hands creating future. By Ralph Losey with ChatGPT4 Visual Muse version.
